Introduction: When Technology Feels Too Close to Home

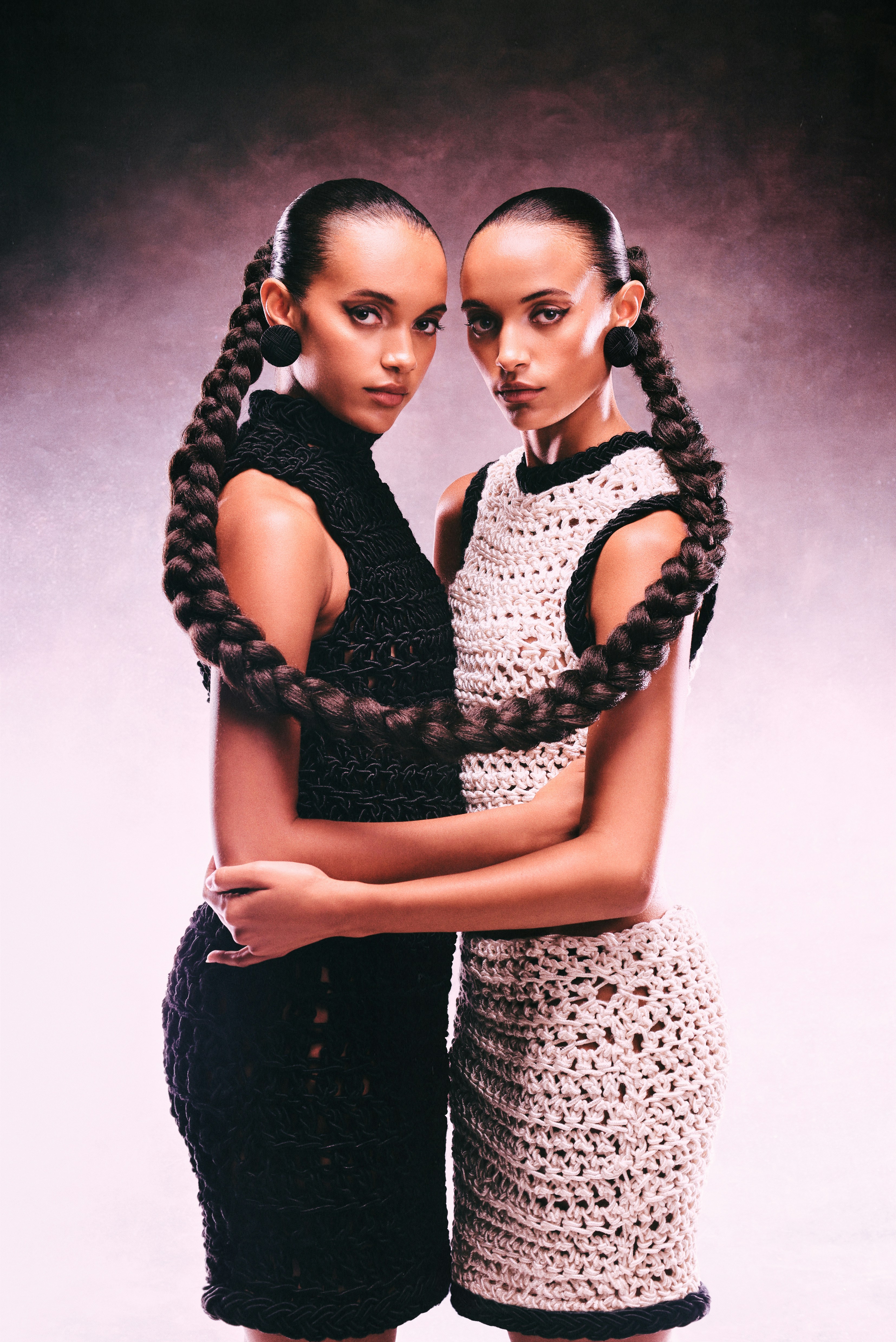

Picture this: you open your email and discover a message that sounds exactly like you wrote it—except you didn’t. Or you hear an AI-generated voice note, and it mimics not only your words but also your laughter, pauses, and subtle tones. These experiences are no longer futuristic scenarios—they are happening right now in what experts call the era of the digital doppelgänger.

A digital doppelgänger is not just another AI tool. It is a mirror, a simulation that reflects back elements of your personality, choices, and communication style, creating both wonder and unease. On one hand, people marvel at how “intelligent” technology has become. On the other hand, many feel disoriented, even threatened, when a machine can echo their uniqueness.

The rise of digital doppelgängers challenges fundamental aspects of human psychology: identity, agency, creativity, and trust. If machines can replicate us convincingly, where does the authentic self begin and end?

This article brings together insights from psychology, neuroscience, social psychology, and human-computer interaction. We’ll explore what digital doppelgängers are, why they feel so persuasive, the risks and benefits they pose, and practical strategies to stay grounded. Finally, we’ll walk through step-by-step methods to preserve your sense of self in a world where AI might look, sound, and even think like you.

1. What is a Digital Doppelgänger?

A digital doppelgänger is an AI-generated version of a person, designed to mimic speech, appearance, behaviors, or decision-making. Unlike simple chatbots or voice assistants, digital doppelgängers feel personal because they use your data—your texts, photos, emails, or social media behavior—to construct a replica.

They can take many forms:

- Voice clones: AI models that learn tone, rhythm, and vocal nuances (e.g., synthetic recordings for advertisements).

- Text mimics: Systems that replicate your writing style, sentence structure, and vocabulary, making emails or posts indistinguishable from your own.

- Visual avatars and deepfakes: AI-generated videos where your likeness is placed in another context, sometimes for entertainment, but increasingly for misinformation.

- Behavioral models: Predictive algorithms that learn your shopping choices, music tastes, or even political opinions.

Why This Matters

The creation of digital doppelgängers forces us to ask:

- Who owns your digital identity?

- What happens if others manipulate or misuse your likeness?

- How does seeing a near-perfect version of yourself change your understanding of who you are?

These are not just legal questions—they are deeply psychological ones about selfhood, authenticity, and control.

2. Why AI Doppelgängers Feel So Real: The Psychology

2.1 The CASA Paradigm (Computers Are Social Actors)

Reeves & Nass (1996) demonstrated that humans treat machines socially, even when we consciously know they are “just” code. When AI mimics us, our brains unconsciously respond as if it were a living reflection. That’s why a digital version of yourself feels eerily alive, even though you know it’s artificial.

Example: Talking to a chatbot in your own voice can feel like talking to a sibling, even if you know logically it’s machine-generated.

2.2 Social Comparison Theory

Festinger’s (1954) theory shows that humans evaluate themselves by comparing themselves with others. When the “other” is an AI copy of you (more polished, faster, or more persuasive), the comparison can feel threatening. Instead of competing with colleagues, you’re competing with yourself… or at least, your digital twin.

Example: A student sees an AI tool generate an essay in her writing style within seconds. Instead of feeling relieved, she feels insecure—“Am I really that replaceable?”

2.3 The Proteus Effect

Yee & Bailenson (2007) found that avatars influence real-world behavior. If your AI doppelgänger presents you as charismatic or confident, you might internalize those traits. Conversely, if it exaggerates your flaws, it can damage your self-perception.

Example: An AI avatar of a shy employee is portrayed as timid in a training simulation. The employee starts believing that’s “who they are,” reinforcing insecurity rather than growth.

2.4 The Uncanny Valley

When AI replicas look or sound almost human but not quite, people experience discomfort (Mori et al., 2012). This “almost-real” quality of doppelgängers explains why deepfakes or AI-cloned voices often feel creepy rather than impressive.

Example: A mother hears her son’s voice cloned by scammers asking for money. Though she recognizes something “off,” the uncanny closeness tricks her emotions.

2.5 The Extended Mind Hypothesis

Clark & Chalmers (1998) argue that our tools become part of our cognitive system. If AI can anticipate your decisions, complete your sentences, and mirror your preferences, the line between “self” and “technology” blurs. This blurring can be empowering or destabilizing, depending on how we engage with it.

3. Psychological Risks of Digital Doppelgängers

- Erosion of Agency: Reliance on AI to predict and decide weakens self-confidence. People may think: “If AI knows me so well, why should I bother?” (Carr, 2011).

- Identity Confusion: When AI behaves “as you” but in ways you wouldn’t, it creates cognitive dissonance. Sherry Turkle (2011) describes how interacting with digital replicas can destabilize identity and increase alienation.

- Creativity Suppression: Amabile (1996) found that intrinsic motivation fuels creativity. If AI can produce art, music, or writing faster than you, you might stop creating, believing your originality no longer matters.

- Privacy and Exploitation: Data-driven doppelgängers can be hacked, misused, or weaponized, raising risks not only to identity but also to safety (Vaccari & Chadwick, 2020).

- Emotional Manipulation: If an AI twin can speak like you, it can also be used to deceive others, damaging relationships, reputation, and trust.

4. Benefits Worth Keeping

Not all digital twins are harmful. Properly used, they can support:

- Therapy Simulations: Psychologists use AI avatars for exposure therapy, role-playing, or practicing conversations.

- Skill Development: AI speech coaches can replicate your style, offering feedback on tone, clarity, and pacing.

- Productivity Boost: Drafting documents or responding to emails in your voice saves time.

- Self-Reflection: Seeing your digital reflection can reveal blind spots, like noticing you speak too quickly or dominate conversations.

The challenge is balance: using AI as a mirror, not a master.

6. Step-by-Step Guide: Staying Grounded Amid Digital Mirrors

Step 1: Awareness and Education

What to Do: Learn how digital doppelgängers are made—through data aggregation, voice cloning, deepfakes, or predictive algorithms.

Why It Works: Fear often grows in the dark. As Turkle (2011) highlights, when people don’t understand technology, they project uncertainty and anxiety onto it. Education empowers you to see the mechanics behind the illusion.

Example: Reading about how voice AI works turns a “scary” voice clone into what it truly is: a machine learning pattern generator—not your soul trapped in a file.

Step 2: Emotional Check-Ins

What to Do: Pause when you encounter an AI mimic of yourself. Ask: “What emotions are arising?” Is it excitement? Curiosity? Fear? Jealousy? Write them down or say them aloud.

Why It Works: Emotion regulation research (Gross, 2015) indicates that naming emotions can reduce their intensity, a process known as “affect labeling.” It interrupts spirals of fear or shame and helps regulate the nervous system.

Example: Seeing an AI chatbot mimic your writing may trigger envy (“It’s better than me”) or pride (“It sounds like me!”). By naming it, you take back control from the reaction.

Step 3: Separate “Self” from “Simulation”

What to Do: Remind yourself: “This is a digital mirror made from data—it is not me.”

Why It Works: AI models lack lived experience, consciousness, or emotional context. By cognitively reframing, you prevent identity fusion, where people feel merged with their online avatars (Gonzales & Hancock, 2011).

Example: If an AI misrepresents you in tone or exaggerates quirks, view it as a distorted reflection, not a threat to your authentic self.

Step 4: Strengthen Somatic Awareness

What to Do: Tune into your body when AI triggers unease. Practice diaphragmatic breathing, progressive muscle relaxation (Jacobson, 1938), or grounding through posture and touch (Porges, 2011).

Why It Works: Anxiety about identity is felt physiologically: racing heart, shallow breath, tight jaw. Somatic awareness interrupts the cycle by engaging the vagus nerve, which slows the stress response, and by restoring safety cues.

Example: Before watching a deepfake of yourself, place both feet on the ground, take five belly breaths, and relax your shoulders.

Step 5: Journal Reflections

What to Do: After encountering AI versions of yourself, journal about the experience. What did you notice? What emotions arose? How did you respond?

Why It Works: Pennebaker (1997) found expressive writing helps integrate difficult experiences, reduce stress, and clarify self-concept. Journaling externalizes fears, preventing them from festering.

Example: “I felt anxious when I heard my AI voice clone. But I realized my friends could tell the difference. That gave me relief.”

Step 6: Limit Overexposure

What to Do: Set healthy boundaries around AI use. Balance digital time with grounding activities like art, journaling, movement, or spending time in nature.

Why It Works: Overexposure intensifies desensitization and detachment, while time offline restores perspective. Nature exposure specifically reduces stress and restores attentional balance (Kaplan, 1995).

Example: After experimenting with AI for work, spend an evening phone-free, sketching or walking outdoors. This anchors you back in embodied reality.

Step 7: Reconnect with Values

What to Do: When AI confuses your sense of self, ask: “What really matters to me?” Then act on that value.

Why It Works: Acceptance and Commitment Therapy (Hayes et al., 1999) emphasizes values-driven action to counter avoidance and identity diffusion. By focusing on your values—family, creativity, growth—you anchor identity in lived choices, not digital reflections.

Example: If an AI writes in your style, reclaim agency by creating original work aligned with your personal mission, like supporting mental health advocacy.

Step 8: Use Doppelgängers as Tools, Not Authorities

What to Do: Let AI assist you but never replace your judgment. Treat it like a paintbrush, not a painter.

Why It Works: Research in human-computer interaction warns against over-reliance on AI, which can reduce confidence in human decision-making (Carr, 2011). By setting boundaries, you remain the author of your choices.

Example: Use an AI draft for a blog post but ensure the final edits reflect your authentic voice and perspective.

Step 9: Create Rituals of Authenticity

What to Do: Establish daily practices that remind you of your uniqueness: affirmations, morning mindfulness, unplugged evenings, or rituals of gratitude.

Why It Works: Rituals anchor identity, reinforcing continuity of self even amid technological change (Hobson et al., 2018). They cultivate psychological safety and prevent identity erosion.

Example: Begin your day by journaling one thing only you can uniquely bring to the world—your lived experiences.

Step 10: Seek Support if Distress Persists

What to Do: If AI-triggered anxiety or identity confusion grows overwhelming, seek therapy, coaching, or supportive peer groups.

Why It Works: Professional support helps process identity challenges, set healthy boundaries with technology, and prevent anxiety escalation. Cognitive-behavioral and existential therapies are especially helpful (Beck, 2011; Yalom, 1980).

Example: Sharing with a therapist: “I feel uneasy when AI mimics me. It makes me question my worth.” This externalizes the fear and allows structured coping strategies.

7. Preparing for the Future: Resilient Digital Selves

As AI advances, doppelgängers will become more realistic. Schools, workplaces, and families must foster digital resilience: the ability to engage critically with AI without losing one’s sense of self. This includes:

- Digital literacy programs

- Ethical boundaries on AI replication

- Personal boundaries with technology use

Conclusion: You Are More Than Your Digital Reflection

AI may replicate your style, your speech, even your choices, but it cannot replicate your lived experiences, emotions, or consciousness. By cultivating awareness, grounding practices, and intentional boundaries, you can harness the benefits of digital doppelgängers without losing yourself in the mirror.

Your digital reflection is just that: a reflection. The real you is deeper, richer, and irreplaceable.

References

- Amabile, T. M. (1996). Creativity in context. Westview Press.

- American Psychiatric Association. (2013). Diagnostic and statistical manual of mental disorders (5th ed.).

- Bandura, A. (1982). Self-efficacy mechanism in human agency. American Psychologist, 37(2), 122–147.

- Beck, J. S. (2011). Cognitive behavior therapy: Basics and beyond (2nd ed.). Guilford Press.

- Carr, N. (2011). The shallows: What the Internet is doing to our brains. W. W. Norton.

- Clark, A., & Chalmers, D. (1998). The extended mind. Analysis, 58(1), 7–19.

- Festinger, L. (1954). A theory of social comparison processes. Human Relations, 7, 117–140.

- Gilbert, P. (2001). Evolutionary approaches to psychopathology. British Journal of Medical Psychology, 74(4), 353–395.

- Gonzales, A. L., & Hancock, J. T. (2011). Mirror, mirror on my Facebook wall: Effects of exposure to Facebook on self-esteem. Cyberpsychology, Behavior, and Social Networking, 14(1–2), 79–83.

- Gross, J. J. (2015). Emotion regulation: Current status and future prospects. Psychological Inquiry, 26(1), 1–26.

- Hayes, S. C., Strosahl, K. D., & Wilson, K. G. (1999). Acceptance and commitment therapy: An experiential approach to behavior change. Guilford Press.

- Hobson, N. M., Schroeder, J., Risen, J. L., Xygalatas, D., & Inzlicht, M. (2018). The psychology of rituals: An integrative review and process-based framework. Personality and Social Psychology Review, 22(3), 260–284.

- Jacobson, E. (1938). Progressive relaxation. University of Chicago Press.

- Kaplan, S. (1995). The restorative benefits of nature: Toward an integrative framework. Journal of Environmental Psychology, 15(3), 169–182. https://doi.org/10.1016/0272-4944(95)90001-2

- LeDoux, J. (2000). Emotion circuits in the brain. Annual Review of Neuroscience, 23, 155–184.

- Mori, M., MacDorman, K. F., & Kageki, N. (2012). The uncanny valley. IEEE Robotics & Automation Magazine, 19(2), 98–100.

- Pennebaker, J. W. (1997). Writing about emotional experiences as a therapeutic process. Psychological Science, 8(3), 162–166.

- Porges, S. W. (2011). The polyvagal theory: Neurophysiological foundations of emotions, attachment, communication, and self-regulation. W. W. Norton.

- Reeves, B., & Nass, C. (1996). The media equation. Cambridge University Press.

- Sapolsky, R. M. (2004). Why zebras don’t get ulcers. Holt Paperbacks.

- Turkle, S. (2011). Alone together: Why we expect more from technology and less from each other. Basic Books.

- Vaccari, C., & Chadwick, A. (2020). Deepfakes and disinformation. Social Media + Society, 6(1), 1–13.

- Yalom, I. D. (1980). Existential psychotherapy. Basic Books.

- Yee, N., & Bailenson, J. (2007). The Proteus effect. Human Communication Research, 33(3), 271–290.
